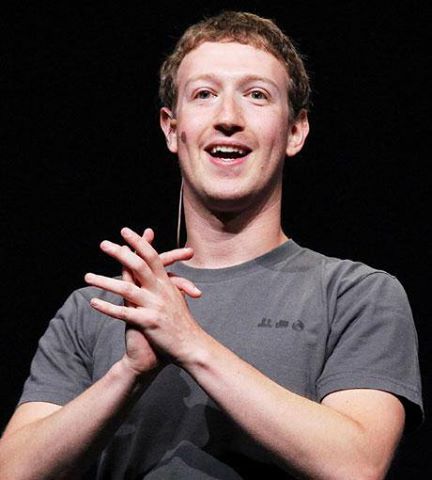

World News Actuality Claire Evren & CEO of Facebook Mark Zuckerberg

« I spend most of my time thinking about how to connect the world and serve our community better, but a lot of that time isn’t in our office or meeting with people or doing what you’d call real work. »

« I take a lot of time just to read and think about things by myself, » he added. « If you count the time I’m in the office, it’s probably no more than 50-60 hours a week. But if you count all the time I’m focused on our mission, that’s basically my whole life. »

- World News Actuality Presented By Claire Evren

« For 2018, my personal challenge has been to focus on addressing some of the most important issues facing our community — whether that’s preventing election interference, stopping the spread of hate speech and misinformation, making sure people have control of their information, and ensuring our services improve people’s well-being. In each of these areas, I’m proud of the progress we’ve made. »

« We’re a very different company today than we were in 2016, or even a year ago. We’ve fundamentally altered our DNA to focus more on preventing harm in all our services, and we’ve systematically shifted a large portion of our company to work on preventing harm. We now have more than 30,000 people working on safety and invest billions of dollars in security yearly. »

« To be clear, addressing these issues is more than a one-year challenge. But in each of the areas I mentioned, we’ve now established multi-year plans to overhaul our systems and we’re well into executing those roadmaps. In the past we didn’t focus as much on these issues as we needed to, but we’re now much more proactive. »

« That doesn’t mean we’ll catch every bad actor or piece of bad content, or that people won’t find more examples of past mistakes before we improved our systems. For some of these issues, like election interference or harmful speech, the problems can never fully be solved. They’re challenges against sophisticated adversaries and human nature where we must constantly work to stay ahead. But overall, we’ve built some of the most advanced systems in the world for identifying and resolving these issues, and we will keep improving over the coming years. »

« We’ve made a lot of improvements and changes this year, and here are some of the most important ones: »

« For preventing election interference, we’ve improved our systems for identifying the fake accounts and coordinated information campaigns that account for much of the interference — now removing millions of fake accounts every day. We’ve partnered with fact-checkers in countries around the world to identify misinformation and reduce its distribution. We’ve created a new standard for advertising transparency where anyone can now see all the ads an advertiser is running to different audiences. We established an independent election research commission to study threats and our systems to address them. And we’ve partnered with governments and law enforcement around the world to prepare for elections. »

« For stopping the spread of harmful content, we’ve built AI systems to automatically identify and remove content related to terrorism, hate speech, and more before anyone even sees it. These systems take down 99% of the terrorist-related content we remove before anyone even reports it, for example. We’ve improved News Feed to promote news from trusted sources. We’re developing systems to automatically reduce the distribution of borderline content, including sensationalism and misinformation. We’ve tripled the size of our content review team to handle more complex cases that AI can’t judge. We’ve built an appeals system for when we get decisions wrong. We’re working to establish an independent body that people can appeal decisions to and that will help decide our policies. We’ve begun issuing transparency reports on our effectiveness in removing harmful content. And we’ve also started working with governments, like in France, to establish effective content regulations for internet platforms. »

« For making sure people have control of their information, we changed our developer platform to reduce the amount of information apps can access — following the major changes we already made back in 2014 to dramatically reduce access that would prevent issues like what we saw with Cambridge Analytica from happening today. We rolled out new controls for GDPR around the whole world and asked everyone to check their privacy settings. We reduced some of the third-party information we use in our ads systems. We started building a Clear History tool that will give people more transparency into their browsing history and let people clear it from our systems. And we’ve continued developing encrypted and ephemeral messaging and sharing services that we believe will be the foundation for how people communicate going forward. »

« For making sure our services improve people’s well-being, we conducted research that found that when people use the internet to interact with others, that’s associated with all the positive aspects of well-being you’d expect, including greater happiness, health, feeling more connected, and so on. But when you just use the internet to consume content passively, that’s not associated with those same positive effects. Based on this research, we’ve changed our services to encourage meaningful social interactions rather than passive consumption. One change we made reduced the amount of viral videos people watched by 50 million hours a day. In total, these changes intentionally reduced engagement and revenue in the near term, although we believe they’ll help us build a stronger community and business over the long term. »

« If you’re interested in reading more about these changes, I’ve written extensively about our work on elections here (https://www.facebook.com/notes/mark-zuckerberg/preparing-for-elections/10156300047606634/) and content governance and enforcement here (https://www.facebook.com/notes/mark-zuckerberg/a-blueprint-for-content-governance-and-enforcement/10156443129621634/). You can also read about our research on well-being here (https://newsroom.fb.com/…/hard-questions-is-spending-time-…/). »

« I’ve learned a lot from focusing on these issues and we still have a lot of work ahead. I’m proud of the progress we’ve made in 2018 and grateful to everyone who has helped us get here — the teams inside Facebook, our partners and the independent researchers and everyone who has given us so much feedback. I’m committed to continuing to make progress on these important issues as we enter the new year. »

« I’m also proud of the rest of the progress we’ve made this year. More than 2 billion people now use one of our services every single day to stay connected with the people who matter most in their lives. Hundreds of millions of people are part of communities they tell us make up their most important social support. People have come together using these tools to raise more than $1 billion for causes and to find more than 1 million new jobs. More than 90 million small businesses use our tools, and more than half say they’ve hired more people because of them. Building community and bringing people together leads to a lot of good, and I’m committed to continuing our progress in these areas as well. »

« Here’s to a great new year to come. »

CEOs famously work long hours and have little time for their families. Though Zuckerberg works a much longer week than the average American, he seems to have struck a better work-life balance than most other heads of corporations.

Zuckerberg sets personal challenges for himself, including hunting all the meat that he eats, learning Mandarin and reading a new book every other week.

In the Q&A, Zuckerberg noted that he reads both fiction and nonfiction but “probably” more fact-based books.

As for learning Mandarin, he said the key to learning a language is to “put in the time and let it seep into your mind.”

“Learning a language is extremely humbling because there’s no way to ‘figure it out’ by just being clever,” he noted.

In addition to discussing his work-life balance, Zuckerberg touched on a number of other subjects, including a couple of controversial ones.

He also addressed his widely panned $100 million donation to the public school system of Newark, New Jersey, which critics say failed to live up to its promise of making the schools “a symbol of educational excellence for the whole nation.” The donation was also criticized for giving too much of the money to consulting firms.

“A lot of good work has come from that grant,” Zuckerberg said. “The highlight is that the graduation rate has improved by more than 10% since we’ve started our program there. The leaders we’ve worked with in NJ have started many new high performing schools, paid teachers more and have improved the schools in lots of other ways.”

The social network has vowed to police its users.

Facebook banned 20 organizations and individuals in Myanmar, including a senior military commander, acknowledging that it was “too slow” to prevent the spread of “hate and misinformation” in the country after the United Nations “found evidence that many of these individuals and organizations committed or enabled serious human rights abuses in the country.”

Twitter (TWTR) also began to take more concrete steps to tackle hate speech and harassment on its platform, after years of being known as a place where users sometimes faced sexist and racist attacks.

Twitter announced a new policy prohibiting “dehumanizing speech,” expanding on its hate speech rules banning direct attacks or threats of violence based on race, sexual orientation or gender.

Facebook executives say they are working diligently to rid the platform of dangerous posts.

“It’s not our place to correct people’s speech, but we do want to enforce our community standards on our platform,” one executive said. “When you’re in our community, we want to make sure that we’re balancing freedom of expression and safety.” Executives said that the primary goal was to prevent harm and that, to a great extent, the company had been successful. But perfection, they said, is not possible.

“We have billions of posts every day, we’re identifying more and more potential violations using our technical systems,” an executive said. “At that scale, even if you’re 99 percent accurate, you’re going to have a lot of mistakes.”

- World News Actuality Presented By Claire Evren Profile of the week CEO of Meta Mark Zuckerberg

The Rules

Moderators are given guides to help them decide which posts to remove.

The Facebook guidelines do not look like a handbook for regulating global politics. They consist of dozens of unorganized PowerPoint presentations and Excel spreadsheets with bureaucratic titles like “Western Balkans Hate Orgs and Figures” and “Credible Violence: Implementation standards.”

Moderators are instructed to remove any post praising, supporting or representing any listed figure.

Countries where Facebook faces government pressure seem to be better covered than those where it does not. Facebook blocks dozens of far-right groups in Germany, where the authorities scrutinize the social network, but only one in neighboring Austria.
